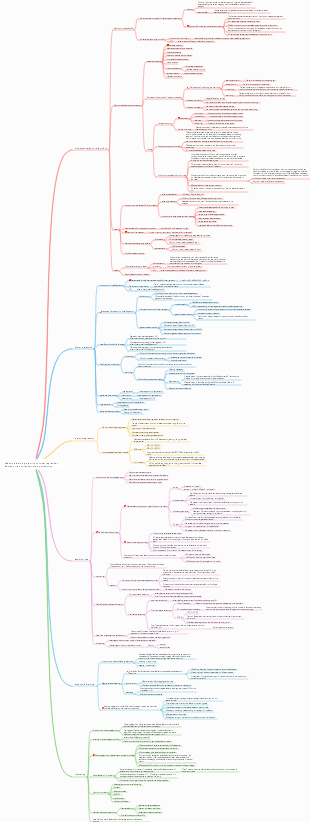

MindMap Gallery Basic regression algorithm for machine learning

- 44

Basic regression algorithm for machine learning

It summarizes the basic regression algorithms in machine learning, such as basic linear regression, recursive regression, regularized linear regression, sparse linear regression Lasso, linear basis function regression, singular value decomposition, error decomposition of regression learning, etc.

Edited at 2023-02-15 23:14:30- ハワイ・オアフ島 5 日間モデルプラン マインドマップテンプレート

本テンプレートは、日本人に人気の海外リゾート地「ハワイ・オアフ島」を対象とした、5 日間の充実したモデル旅行プランを体系化したマインドマップです。初めてハワイを訪れる旅行者、リピーター、家族連れやカップルなど、多様なニーズに対応するため、旅行基本情報・持ち物チェックリスト・5 日間詳細スケジュール・オプションプラン・事前準備情報の 5 つの軸で構成されています。対象読者は日本からオアフ島へ旅行を計画中の 20〜50 代の旅行者であり、成果指標としては、情報の網羅性(渡航手続きから現地体験まで必要な項目が過不足なく含まれているか)、実用性(移動時間や費用、予約のタイミングなどの正確さ)、体験の充実度(自然・文化・アクティビティ・食事のバランス)を測定します。 ユーザーニーズ分析では、渡航準備・現地移動・体験プラン・安全情報の 4 領域を掘り下げます。渡航準備においては、ESTA 申請、飛行機の予約、為替、パスポートの有効期限、海外旅行保険など、事前に整えるべき情報が不足していると計画が難しくなります。求められる価値としては、渡航に必要な手続きの流れ、必要な持ち物リスト、季節別の服装アドバイス、現地で使えるアプリや連絡先などが考えられます。現地移動では、ワイキキ内の徒歩・バス移動、レンタカーの利用方法、空港からのアクセス、交通機関のルール(右側通行など)が主な関心事です。悩みとしては、バスの路線が分からない、レンタカーの予約手続きが不安、現地での移動時間の目安が分からないなどが挙げられます。体験プランでは、ビーチでのんびり、ダイヤモンドヘッドのハイキング、ノースショアでのサーフィン、ハレイワタウン散策、ポリネシアン・カルチャーセンターでの文化体験など、オアフ島の魅力を網羅したプランが求められます。悩みとしては、限られた日数で主要スポットを効率よく回れない、予算に合ったアクティビティの選び方が分からない、人気のレストランやショップの情報が不足しているなどが挙げられます。安全情報では、ハワイ特有の注意点(紫外線対策、海での安全ルール、治安情報)、緊急時の連絡先、現地でのトラブル対応方法など、旅行者が不安に感じる点を整理することが重要です。 5 日間のモデルコースでは、各日のテーマを明確に設定し、体験のバランスを考慮しています。1 日目は「到着日・ワイキキ慣らし」として、ホノルル国際空港に到着後、ワイキキのホテルにチェックインし、夕方からワイキキビーチでのんびりしたり、夜は地元料理を味わったりして、ハワイの雰囲気に慣れる行程です。2 日目は「自然体験&ショッピング」として、午前中にダイヤモンドヘッドのハイキングに挑戦し、午後はアラモアナセンターやワイキキでショッピングを楽しみ、夜はハワイアン・ルアウショーを鑑賞する行程です。3 日目は「歴史文化巡り」として、イオラニ宮殿やパールハーバー(真珠湾)を訪れてハワイの歴史に触れ、午後はダウンタウンホノルルで街歩きをし、夜はインターナショナルマーケットプレイスで食事や買い物を楽しむ行程です。4 日目は「北海岸&大自然体験」として、オプションでノースショアへ向かい、ハレイワタウンで散策したり、サーフィンを体験したり、美しいビーチでのんびり過ごす行程で、夜はワイキキに戻って食事を楽しみます。5 日目は「最終日・思い出作り」として、午前中にワイキキビーチでの最後の散策や、お土産を買いに街を巡り、午後は空港へ移動して帰国する行程です。各日には、おすすめの時間帯、混雑しにくいタイミング、予約が必要なアクティビティの情報などを付け加え、実際に旅行する際の参考になるよう工夫しています。 また、テンプレートには持ち物チェックリストも含まれており、パスポート・ESTA、海外旅行保険証書、現金・クレジットカード、日焼け止め・帽子・サングラス、歩きやすい靴、薬、充電器など、海外旅行に必要なアイテムをリストアップしています。さらに、事前準備情報として、ネット環境の確保、現地で使えるアプリ、緊急連絡先、季節別の服装アドバイスなども記載し、旅行者の不安を解消するようサポートします。 EdrawMind のマインドマップ機能を活用することで、ユーザーは自身の旅行スタイルに合わせて行程を追加・削除したり、好みのアクティビティをハイライトしたりすることができます。例えば、ゆっくりリゾートを楽しみたい方はショッピングやハイキングの時間を減らしてビーチでの時間を増やしたり、アクティブに過ごしたい方はノースショアでのサーフィンやダイビングを追加したりするなど、カスタマイズも自由自在です。このテンプレートは、オアフ島の旅行計画を立てる際の基盤として活用することを想定しており、主要な情報が一目で分かるよう整理されているため、初めてハワイを

- 奈良 1 泊 2 日旅行プラン マインドマップテンプレート

本テンプレートは、古都・奈良の世界遺産、鹿とのふれあい、歴史的な雰囲気を存分に楽しむための 1 泊 2 日旅行プランを体系化したマインドマップです。修学旅行や短期文化旅行、週末の小旅行に人気の奈良を対象に、イメージ・種類・交通・宿泊の 4 つの基本軸を設け、2 日間の具体的な行程を時系列で整理しています。対象読者は大阪・京都在住の 20〜40 代の一人旅・カップル・家族連れ、初めて奈良を訪れる旅行者、世界遺産や日本文化に興味のある層であり、成果指標としては、行程の網羅性(主要スポットが過不足なく含まれているか)、実用性(移動時間や混雑情報の正確さ)、体験の充実度(鹿とのふれあい・文化体験の満足度)を測定します。 ユーザーニーズ分析では、行程・体験・交通・注意点の 4 領域を掘り下げます。行程においては、「東大寺」「春日大社」「奈良国立博物館」といった世界遺産の回り方、「奈良公園」での鹿とのふれあい、「奈良町」の古い町並み散策がユーザーの関心事となります。悩みとしては、限られた時間で主要スポットを効率よく回れない、鹿との接し方が分からない、徒歩移動の負担が心配などが挙げられます。求められる価値としては、時間帯別のおすすめルート、鹿と安全に接するためのマナー説明、無理のない徒歩移動のための休憩ポイント案内が考えられます。体験面では、鹿せんべいの購入場所や与え方、春日大社の灯篭や御朱印の魅力、奈良町のカフェや伝統工芸体験など、現地でしか味わえない体験情報が求められます。交通においては、奈良市内のバス路線や一日券の情報、主要スポット間の徒歩時間、雨天時の移動手段など、事前に知っておくべき情報が不足していると計画が難しくなります。注意点では、天候対策(夏の暑さや冬の寒さ)、スケジュールのゆとり作り、写真撮影のルールやマナー、ゴミの持ち帰りなど、旅行者が見落としがちな点を整理することが重要です。 行程の中でも、特に人気の高いスポットには詳細な情報を盛り込んでいます。「東大寺」は世界遺産に登録されており、奈良時代に建立された日本を代表する寺院で、世界最大級の木造建築物である大仏殿や、高さ約 15 メートルの盧舎那仏(奈良の大仏)が有名です。事前にコインロッカーに荷物を預けて身軽になってから訪れることで、ゆっくりと境内を散策できるほか、大仏殿の柱の穴をくぐると「厄除けになる」という言い伝えもあり、多くの観光客が体験しています。「奈良公園」は東大寺や春日大社を含む広大な公園で、約 1,300 頭の野生の鹿が自由に生息しており、鹿せんべいを使って鹿とふれあうことができます。ただし、鹿は野生動物であるため、エサの与え方や触れ方には注意が必要で、事前にルールを確認しておくことが推奨されます。「春日大社」は朱色の社殿と美しい灯篭が特徴的な世界遺産で、参道には 3,000 基を超える石灯篭が並び、神聖な雰囲気を醸し出しています。特に夜間にライトアップされた灯篭は幻想的で、写真撮影にも人気です。 1 泊 2 日のモデルコースでは、初日に東大寺・奈良公園・春日大社を巡り、夜は奈良町の古い町並みを散策して地元料理を味わう行程を提案しています。二日目には、若草山から奈良の街並みを一望した後、興福寺や奈良国立博物館を訪れ、奈良町で伝統工芸体験やカフェ巡りを楽しんでから帰路に就く流れとなっています。各スポットには、徒歩時間や混雑しにくい時間帯、おすすめの食事処などの情報を付け加え、実際に旅行する際の参考になるよう工夫しています。また、旅行の注意点として、スケジュールは体調に合わせて無理のないペースで調整すること、天候に合わせて水分補給や防寒・防暑対策を徹底すること、神社仏閣での写真撮影ルールを守ることなどを記載し、安全で快適な旅行をサポートします。 EdrawMind のマインドマップ機能を活用することで、ユーザーは自身の旅行スタイルに合わせて行程を追加・削除したり、好みのスポットをハイライトしたりすることができます。一人旅向けには静かなカフェ巡りを追加したり、家族連れ向けには鹿とのふれあい体験を充実させたりするなど、カスタマイズも自由自在です。このテンプレートは、奈良の旅行計画を立てる際の基盤として活用することを想定しており、主要な情報が一目で分かるよう整理されているため、初めて奈良を訪れる方でも安心して旅行を楽しむことができます。

- 箱根 週末旅行ガイド

本テンプレートは、東京から約90分でアクセス可能な温泉・富士山・美術館が融合したリゾート地「箱根」の週末旅行ガイドを体系化したマインドマップです。カップルや家族連れに人気の週末旅行先として、交通アクセス、観光スポット、名物料理の3軸で構成され、効率的な旅行計画と満足度の高い体験を実現することを目的としています。対象読者は東京在住の20〜40代のカップル・家族連れ、初めて箱根を訪れる旅行者、週末の小旅行を計画中の層であり、成果指標としては、情報の網羅性(必要な項目が過不足なく含まれているか)、実用性(実際の移動時間や料金の正確さ)、満足度(モデルプランの再現性)を測定します。 ユーザーニーズ分析では、交通アクセス、観光スポット、グルメの3領域を掘り下げます。交通アクセスにおいては、東京からの行き方(小田急ロマンスカー約85分・指定席、新宿→箱根湯本、普通電車約2時間・乗換2回)、箱根内の移動手段(登山電車・バス・ケーブルカー・ロープウェイ)、お得な周遊券(箱根フリーパス・2日券)の情報が不足していると計画が難しくなります。求められる価値としては、交通機関別の所要時間・料金・乗換回数を比較した表、周遊券の特典内容(主要観光施設の割引)と購入場所、移動手段ごとのメリット・デメリットが考えられます。観光スポットでは、「箱根ガラスの森美術館」「クモ箱根(早雲山駅)」「芦ノ湖の夕暮れ遊覧船」などが代表的です。悩みとしては、美術館や自然スポットが多すぎて選べない、夕暮れ時の遊覧船のベストタイミングが分からない、写真映えするスポットを知りたいなどが挙げられます。価値ある情報として、おすすめスポットの特徴と所要時間、夕暮れ時の撮影ポイント、カップル向け・家族向けの選別基準を提供します。名物料理では、「黒たまご(大涌谷)」「温泉豆腐」などが代表的です。悩みは、どこで何を食べれば良いか分からない、観光地価格に見合う価値があるか、アレルギーや食事制限への対応などです。求められる価値として、名物料理の特徴とおすすめ店舗、価格帯、食べるタイミング(例:黒たまごは大涌谷観光の合間に)を整理します。 カップルにおすすめスポットとして、「箱根ガラスの森美術館」はユネスコ世界遺産(※正確には箱根地域全体がジオパークに認定されていますが、イメージとして)の美しい庭園とガラス作品が魅力です。写真はイメージですが、実際の訪日客にも人気のスポットです。名物料理のセクションでは、「黒たまご」は大涌谷の火山活動を利用して茹でられた卵で、殻が黒くなるのが特徴です。伝統的な名物料理として、食べると寿命が延びると言われています。「温泉豆腐」も地元の温泉を利用した料理で、なめらかな食感が特徴です。これらの情報をマップ上で可視化し、移動ルートと組み合わせることで、無駄のない観光計画が立てられます。 成功するための具体施策としては、主要スポットを時系列で結んだ「1泊2日モデルコース」を提供する(例:1日目:新宿→箱根湯本→登山電車→強羅→大涌谷→芦ノ湖遊覧船→宿泊、2日目:箱根ガラスの森美術館→箱根湯本→帰京)、各スポットの「混雑予想時間帯」と「穴場時間帯」をデータで示す(例:芦ノ湖遊覧船は夕暮れ時が混雑するが、その分景色は絶景)、名物料理を食べられる店舗の「営業時間・定休日・予約可否」をリスト化する、の3点が有効です。よくある失敗とその回避策としては、移動手段の乗換えが複雑で迷ってしまうケースでは箱根フリーパスの活用と事前のルート確認を推奨すること、観光スポットの滞在時間を見誤って計画が詰まりすぎるケースでは余裕を持ったスケジューリングと優先順位付けをアドバイスすること、天候によって富士山が見えない場合の代替プラン(雨天でも楽しめる美術館や温泉施設)を用意しておくことが有効です。本テンプレートは、週末旅行ガイドのコンテンツを計画・評価する際の基盤として活用することを想定しています。

Basic regression algorithm for machine learning

- ハワイ・オアフ島 5 日間モデルプラン マインドマップテンプレート

本テンプレートは、日本人に人気の海外リゾート地「ハワイ・オアフ島」を対象とした、5 日間の充実したモデル旅行プランを体系化したマインドマップです。初めてハワイを訪れる旅行者、リピーター、家族連れやカップルなど、多様なニーズに対応するため、旅行基本情報・持ち物チェックリスト・5 日間詳細スケジュール・オプションプラン・事前準備情報の 5 つの軸で構成されています。対象読者は日本からオアフ島へ旅行を計画中の 20〜50 代の旅行者であり、成果指標としては、情報の網羅性(渡航手続きから現地体験まで必要な項目が過不足なく含まれているか)、実用性(移動時間や費用、予約のタイミングなどの正確さ)、体験の充実度(自然・文化・アクティビティ・食事のバランス)を測定します。 ユーザーニーズ分析では、渡航準備・現地移動・体験プラン・安全情報の 4 領域を掘り下げます。渡航準備においては、ESTA 申請、飛行機の予約、為替、パスポートの有効期限、海外旅行保険など、事前に整えるべき情報が不足していると計画が難しくなります。求められる価値としては、渡航に必要な手続きの流れ、必要な持ち物リスト、季節別の服装アドバイス、現地で使えるアプリや連絡先などが考えられます。現地移動では、ワイキキ内の徒歩・バス移動、レンタカーの利用方法、空港からのアクセス、交通機関のルール(右側通行など)が主な関心事です。悩みとしては、バスの路線が分からない、レンタカーの予約手続きが不安、現地での移動時間の目安が分からないなどが挙げられます。体験プランでは、ビーチでのんびり、ダイヤモンドヘッドのハイキング、ノースショアでのサーフィン、ハレイワタウン散策、ポリネシアン・カルチャーセンターでの文化体験など、オアフ島の魅力を網羅したプランが求められます。悩みとしては、限られた日数で主要スポットを効率よく回れない、予算に合ったアクティビティの選び方が分からない、人気のレストランやショップの情報が不足しているなどが挙げられます。安全情報では、ハワイ特有の注意点(紫外線対策、海での安全ルール、治安情報)、緊急時の連絡先、現地でのトラブル対応方法など、旅行者が不安に感じる点を整理することが重要です。 5 日間のモデルコースでは、各日のテーマを明確に設定し、体験のバランスを考慮しています。1 日目は「到着日・ワイキキ慣らし」として、ホノルル国際空港に到着後、ワイキキのホテルにチェックインし、夕方からワイキキビーチでのんびりしたり、夜は地元料理を味わったりして、ハワイの雰囲気に慣れる行程です。2 日目は「自然体験&ショッピング」として、午前中にダイヤモンドヘッドのハイキングに挑戦し、午後はアラモアナセンターやワイキキでショッピングを楽しみ、夜はハワイアン・ルアウショーを鑑賞する行程です。3 日目は「歴史文化巡り」として、イオラニ宮殿やパールハーバー(真珠湾)を訪れてハワイの歴史に触れ、午後はダウンタウンホノルルで街歩きをし、夜はインターナショナルマーケットプレイスで食事や買い物を楽しむ行程です。4 日目は「北海岸&大自然体験」として、オプションでノースショアへ向かい、ハレイワタウンで散策したり、サーフィンを体験したり、美しいビーチでのんびり過ごす行程で、夜はワイキキに戻って食事を楽しみます。5 日目は「最終日・思い出作り」として、午前中にワイキキビーチでの最後の散策や、お土産を買いに街を巡り、午後は空港へ移動して帰国する行程です。各日には、おすすめの時間帯、混雑しにくいタイミング、予約が必要なアクティビティの情報などを付け加え、実際に旅行する際の参考になるよう工夫しています。 また、テンプレートには持ち物チェックリストも含まれており、パスポート・ESTA、海外旅行保険証書、現金・クレジットカード、日焼け止め・帽子・サングラス、歩きやすい靴、薬、充電器など、海外旅行に必要なアイテムをリストアップしています。さらに、事前準備情報として、ネット環境の確保、現地で使えるアプリ、緊急連絡先、季節別の服装アドバイスなども記載し、旅行者の不安を解消するようサポートします。 EdrawMind のマインドマップ機能を活用することで、ユーザーは自身の旅行スタイルに合わせて行程を追加・削除したり、好みのアクティビティをハイライトしたりすることができます。例えば、ゆっくりリゾートを楽しみたい方はショッピングやハイキングの時間を減らしてビーチでの時間を増やしたり、アクティブに過ごしたい方はノースショアでのサーフィンやダイビングを追加したりするなど、カスタマイズも自由自在です。このテンプレートは、オアフ島の旅行計画を立てる際の基盤として活用することを想定しており、主要な情報が一目で分かるよう整理されているため、初めてハワイを

- 奈良 1 泊 2 日旅行プラン マインドマップテンプレート

本テンプレートは、古都・奈良の世界遺産、鹿とのふれあい、歴史的な雰囲気を存分に楽しむための 1 泊 2 日旅行プランを体系化したマインドマップです。修学旅行や短期文化旅行、週末の小旅行に人気の奈良を対象に、イメージ・種類・交通・宿泊の 4 つの基本軸を設け、2 日間の具体的な行程を時系列で整理しています。対象読者は大阪・京都在住の 20〜40 代の一人旅・カップル・家族連れ、初めて奈良を訪れる旅行者、世界遺産や日本文化に興味のある層であり、成果指標としては、行程の網羅性(主要スポットが過不足なく含まれているか)、実用性(移動時間や混雑情報の正確さ)、体験の充実度(鹿とのふれあい・文化体験の満足度)を測定します。 ユーザーニーズ分析では、行程・体験・交通・注意点の 4 領域を掘り下げます。行程においては、「東大寺」「春日大社」「奈良国立博物館」といった世界遺産の回り方、「奈良公園」での鹿とのふれあい、「奈良町」の古い町並み散策がユーザーの関心事となります。悩みとしては、限られた時間で主要スポットを効率よく回れない、鹿との接し方が分からない、徒歩移動の負担が心配などが挙げられます。求められる価値としては、時間帯別のおすすめルート、鹿と安全に接するためのマナー説明、無理のない徒歩移動のための休憩ポイント案内が考えられます。体験面では、鹿せんべいの購入場所や与え方、春日大社の灯篭や御朱印の魅力、奈良町のカフェや伝統工芸体験など、現地でしか味わえない体験情報が求められます。交通においては、奈良市内のバス路線や一日券の情報、主要スポット間の徒歩時間、雨天時の移動手段など、事前に知っておくべき情報が不足していると計画が難しくなります。注意点では、天候対策(夏の暑さや冬の寒さ)、スケジュールのゆとり作り、写真撮影のルールやマナー、ゴミの持ち帰りなど、旅行者が見落としがちな点を整理することが重要です。 行程の中でも、特に人気の高いスポットには詳細な情報を盛り込んでいます。「東大寺」は世界遺産に登録されており、奈良時代に建立された日本を代表する寺院で、世界最大級の木造建築物である大仏殿や、高さ約 15 メートルの盧舎那仏(奈良の大仏)が有名です。事前にコインロッカーに荷物を預けて身軽になってから訪れることで、ゆっくりと境内を散策できるほか、大仏殿の柱の穴をくぐると「厄除けになる」という言い伝えもあり、多くの観光客が体験しています。「奈良公園」は東大寺や春日大社を含む広大な公園で、約 1,300 頭の野生の鹿が自由に生息しており、鹿せんべいを使って鹿とふれあうことができます。ただし、鹿は野生動物であるため、エサの与え方や触れ方には注意が必要で、事前にルールを確認しておくことが推奨されます。「春日大社」は朱色の社殿と美しい灯篭が特徴的な世界遺産で、参道には 3,000 基を超える石灯篭が並び、神聖な雰囲気を醸し出しています。特に夜間にライトアップされた灯篭は幻想的で、写真撮影にも人気です。 1 泊 2 日のモデルコースでは、初日に東大寺・奈良公園・春日大社を巡り、夜は奈良町の古い町並みを散策して地元料理を味わう行程を提案しています。二日目には、若草山から奈良の街並みを一望した後、興福寺や奈良国立博物館を訪れ、奈良町で伝統工芸体験やカフェ巡りを楽しんでから帰路に就く流れとなっています。各スポットには、徒歩時間や混雑しにくい時間帯、おすすめの食事処などの情報を付け加え、実際に旅行する際の参考になるよう工夫しています。また、旅行の注意点として、スケジュールは体調に合わせて無理のないペースで調整すること、天候に合わせて水分補給や防寒・防暑対策を徹底すること、神社仏閣での写真撮影ルールを守ることなどを記載し、安全で快適な旅行をサポートします。 EdrawMind のマインドマップ機能を活用することで、ユーザーは自身の旅行スタイルに合わせて行程を追加・削除したり、好みのスポットをハイライトしたりすることができます。一人旅向けには静かなカフェ巡りを追加したり、家族連れ向けには鹿とのふれあい体験を充実させたりするなど、カスタマイズも自由自在です。このテンプレートは、奈良の旅行計画を立てる際の基盤として活用することを想定しており、主要な情報が一目で分かるよう整理されているため、初めて奈良を訪れる方でも安心して旅行を楽しむことができます。

- 箱根 週末旅行ガイド

本テンプレートは、東京から約90分でアクセス可能な温泉・富士山・美術館が融合したリゾート地「箱根」の週末旅行ガイドを体系化したマインドマップです。カップルや家族連れに人気の週末旅行先として、交通アクセス、観光スポット、名物料理の3軸で構成され、効率的な旅行計画と満足度の高い体験を実現することを目的としています。対象読者は東京在住の20〜40代のカップル・家族連れ、初めて箱根を訪れる旅行者、週末の小旅行を計画中の層であり、成果指標としては、情報の網羅性(必要な項目が過不足なく含まれているか)、実用性(実際の移動時間や料金の正確さ)、満足度(モデルプランの再現性)を測定します。 ユーザーニーズ分析では、交通アクセス、観光スポット、グルメの3領域を掘り下げます。交通アクセスにおいては、東京からの行き方(小田急ロマンスカー約85分・指定席、新宿→箱根湯本、普通電車約2時間・乗換2回)、箱根内の移動手段(登山電車・バス・ケーブルカー・ロープウェイ)、お得な周遊券(箱根フリーパス・2日券)の情報が不足していると計画が難しくなります。求められる価値としては、交通機関別の所要時間・料金・乗換回数を比較した表、周遊券の特典内容(主要観光施設の割引)と購入場所、移動手段ごとのメリット・デメリットが考えられます。観光スポットでは、「箱根ガラスの森美術館」「クモ箱根(早雲山駅)」「芦ノ湖の夕暮れ遊覧船」などが代表的です。悩みとしては、美術館や自然スポットが多すぎて選べない、夕暮れ時の遊覧船のベストタイミングが分からない、写真映えするスポットを知りたいなどが挙げられます。価値ある情報として、おすすめスポットの特徴と所要時間、夕暮れ時の撮影ポイント、カップル向け・家族向けの選別基準を提供します。名物料理では、「黒たまご(大涌谷)」「温泉豆腐」などが代表的です。悩みは、どこで何を食べれば良いか分からない、観光地価格に見合う価値があるか、アレルギーや食事制限への対応などです。求められる価値として、名物料理の特徴とおすすめ店舗、価格帯、食べるタイミング(例:黒たまごは大涌谷観光の合間に)を整理します。 カップルにおすすめスポットとして、「箱根ガラスの森美術館」はユネスコ世界遺産(※正確には箱根地域全体がジオパークに認定されていますが、イメージとして)の美しい庭園とガラス作品が魅力です。写真はイメージですが、実際の訪日客にも人気のスポットです。名物料理のセクションでは、「黒たまご」は大涌谷の火山活動を利用して茹でられた卵で、殻が黒くなるのが特徴です。伝統的な名物料理として、食べると寿命が延びると言われています。「温泉豆腐」も地元の温泉を利用した料理で、なめらかな食感が特徴です。これらの情報をマップ上で可視化し、移動ルートと組み合わせることで、無駄のない観光計画が立てられます。 成功するための具体施策としては、主要スポットを時系列で結んだ「1泊2日モデルコース」を提供する(例:1日目:新宿→箱根湯本→登山電車→強羅→大涌谷→芦ノ湖遊覧船→宿泊、2日目:箱根ガラスの森美術館→箱根湯本→帰京)、各スポットの「混雑予想時間帯」と「穴場時間帯」をデータで示す(例:芦ノ湖遊覧船は夕暮れ時が混雑するが、その分景色は絶景)、名物料理を食べられる店舗の「営業時間・定休日・予約可否」をリスト化する、の3点が有効です。よくある失敗とその回避策としては、移動手段の乗換えが複雑で迷ってしまうケースでは箱根フリーパスの活用と事前のルート確認を推奨すること、観光スポットの滞在時間を見誤って計画が詰まりすぎるケースでは余裕を持ったスケジューリングと優先順位付けをアドバイスすること、天候によって富士山が見えない場合の代替プラン(雨天でも楽しめる美術館や温泉施設)を用意しておくことが有効です。本テンプレートは、週末旅行ガイドのコンテンツを計画・評価する際の基盤として活用することを想定しています。

- Recommended to you

- Outline

machine learning Basic regression algorithm

regression learning

Features

supervised learning

Data set with label y

learning process

The process of determining model parameters w

predict or extrapolate

The process of calculating regression output by substituting new inputs

linear regression

basic linear regression

target linear function

Error Gaussian distribution assumption

There is a discrepancy between the output value and the labeled value

Assuming that the model output is the expected value, the probability function of the random variable (labeled value) yi is

Since the samples are independently and identically distributed, the joint probability density function of all labeled values is

Likelihood function to find optimal parameters (least squares LS solution)

log likelihood function

error sum of squares

maximum likelihood solution

Mean square error test formula

Recursive learning for linear regression

Targeted issues

The scale of the problem is too large and it is difficult to solve the matrix

gradient descent algorithm

Take all samples to calculate the average gradient

average gradient

Recursion formula

Stochastic gradient descent SGD algorithm (LMS)

Take random samples to calculate the gradient

stochastic gradient

Recursion formula

Mini-batch SGD algorithm

Take a small batch of samples to calculate the average gradient

average gradient

Recursion formula

regularized linear regression

Targeted issues

The condition number of the matrix is very large and the numerical stability is not good.

The nature of the large condition number of the problem

Some column vectors of a matrix are proportional or approximately proportional

There are redundant weight coefficients and overfitting occurs.

Solution

Should "reduce the number of model parameters" or "regularize the model parameters"

Regularized objective function

Error sum of squares J(w) hyperparameter λ constraining parameter vector w

form

Regularized least squares LS solution

Regularized Linear Regression Probability Interpretation

The prior distribution of the weight coefficient vector w is the Bayesian "maximum posterior probability estimate" MAP under the Gaussian distribution

Gradient recursion algorithm (small batch stochastic gradient descent method SGD as an example)

Multiple output (output vector y) linear regression

Targeted issues

The output is a vector y instead of a scalar y

Error sum of squares objective function J(W)

Least squares LS solution

Sparse Linear Regression Lasso

norm of the regularization term

Norm p>1

None of the solution coordinates are 0, and the solution is not sparse.

Norm p=1

Most of the solution coordinates are 0, the solutions are sparse, and the processing is relatively easy.

Norm p<1

Most of the solution coordinates are 0, the solutions are sparse, and the processing is difficult.

Lasso problem

content

For the problem of minimizing the error sum of squares function, a constraint ||w||1<t is imposed

regularization expression

Lasso’s cyclic coordinate descent algorithm

preprocessing

Zero-mean the data matrix X columns and normalize them to Z

Lasso's solution in single variable case

Lasso solution

Generalization of Lasso solution in multi-variable cases

Cyclic coordinate descent method CCD

First determine one of the parameters wj

Calculate the parameters that minimize the sum of squared errors

At this time, other parameters w are not optimal values, so the calculation result of wj is only an estimate.

Loop calculation

The same idea is used to calculate other parameters in a loop until the parameter estimates converge.

Part of the residual value ri(j) replaces yi

Mathematically consistent with univariate

parameter estimates

Lasso’s LAR algorithm

Be applicable

Solve the sparse regression problem under 1-norm constraints

Corresponding to the regularized regression problem

Classification

λ=0

Standard least squares problem

The larger λ

The sparser the model parameter solution w vector is, the sparser it is

linear basis function regression

basis function

regression model

data matrix

regression coefficient solution

singular value decomposition

pseudoinverse

SVD decomposition

Regression coefficient model solution

Error decomposition for regression learning

error function

error expectation

Model

theoretical best model

Learning model

error decomposition

Model complexity and error decomposition

The model is simple

Large deviation, small variance

The model is complex

Small deviation, large variance

Appropriate model complexity needs to be chosen